Migrating from Oracle to Snowflake is not just a database upgrade. It is a strategic modernization initiative that requires careful risk management, predictable timelines, and a focus on uninterrupted business operations. For enterprise leaders evaluating an Oracle to Snowflake migration, the ultimate goal is clear: unlock cloud scalability without breaking critical reporting, downstream processes, or BI dashboards.

If you are asking yourself, “should I migrate Oracle data warehouse to Snowflake?”, the answer lies in your current technical debt and future data needs. Many organizations find that legacy on-premise systems simply cannot keep pace with modern analytics demands. Building a strong Oracle to Snowflake migration business case starts with understanding why enterprises are making the move.

The Limits of Legacy Oracle Analytics

Mid-to-large enterprises running Oracle (on-prem, Exadata, or Oracle Cloud) frequently hit operational walls when scaling their data capabilities. Typical pain points include:

- Performance bottlenecks: Mixing OLTP (transactional) and analytics workloads on the same infrastructure causes severe slowdowns.

- Complex capacity planning: Hardware provisioning takes months, limiting agility.

- Costly overhead: High licensing fees, expensive maintenance contracts, and a heavy dependency on highly specialized DBAs drain IT budgets.

- Rigid infrastructure: Limited elasticity makes it difficult and expensive to scale up for peak reporting periods.

What Snowflake Changes

Snowflake entirely shifts the data warehousing paradigm. By moving to the Data Cloud, enterprises gain advantages that traditional Oracle environments cannot match:

- Separation of storage and compute: Scale processing power and storage independently, paying only for what you use.

- Near-infinite concurrency: Run massive queries simultaneously without resource contention or locking.

- Zero-management architecture: Automatic scaling, tuning, and maintenance remove the burden of manual database administration.

- Modern ecosystem integration: Deep compatibility with modern ELT, Change Data Capture (CDC), and advanced analytics tooling.

Typical Enterprise Migration Drivers

The push for an Oracle data warehouse modernization to Snowflake is rarely driven by IT alone. Business leaders champion this transition for several strategic reasons:

- Offloading heavy analytics queries from transactional Oracle systems.

- Preparing infrastructure for advanced AI and machine learning workloads.

- Consolidating siloed reporting databases into a single source of truth.

- Unlocking industry-specific insights, such as Oracle to Snowflake migration for finance analytics, healthcare data compliance, or real-time retail reporting.

The biggest risk in an Oracle to Snowflake migration is not moving tables and rows. The true risk is disrupting business continuity. Breaking critical BI dashboards, regulatory reports, and downstream pipelines can cost millions in downtime and lost productivity. A pure “lift-and-shift” technical move is rarely the right answer.

Instead, success requires a phased, validated migration roadmap. This means validating data continuously, running systems in parallel, and executing controlled cutovers.

This is where a specialized partner comes in. Multishoring has extensive experience guiding regulated, data-intensive enterprises through complex Oracle to Snowflake migrations. By using a structured, low-risk approach, we help organizations navigate the transition safely, ensuring predictable timelines and immediate business value realization.

Need an Oracle to Snowflake migration partner?

We combine strategic advisory with hands-on data engineering to ensure your transition to the Snowflake Data Cloud is secure, predictable, and completely risk-free.

Let’s arrange a low-risk migration assessment for your team.

Let’s arrange a low-risk migration assessment for your team.

Build the Business Case and Migration Strategy

A successful Oracle to Snowflake migration strategy starts long before any data moves. Executive sponsors must define the exact scope, target outcomes, and technical constraints to shift a project from a conceptual idea to a funded, actionable roadmap. Clarifying these elements upfront prevents budget overruns and ensures the business sees value early.

1. Assess Your Current Oracle Estate

Before designing the target architecture, you need a precise inventory of your existing infrastructure. This includes identifying OLTP systems, reporting databases, standalone data warehouses, Oracle Analytics deployments, and downstream BI tools. Whether you are planning an on-prem Oracle to Snowflake migration or mapping out an Oracle Exadata to Snowflake migration strategy, a thorough workload analysis is non-negotiable.

Data engineering teams should leverage Oracle’s native diagnostic tools to profile the environment:

- Analyze query performance: Use AWR (Automatic Workload Repository) reports and performance views like V$SQL and V$SQLAREA to understand query patterns.

- Map constraints: Document strict SLAs, concurrency limits, and peak load requirements.

- Identify a “lighthouse” migration: Do not migrate everything at once. Select a high-value, low-complexity workload – such as a standalone sales or finance data mart – to migrate first. This demonstrates early success and builds organizational confidence.

2. Define Target Outcomes and Success Metrics

A migration without strict success criteria is a major operational risk. Leadership must align on exactly what the new environment needs to deliver to justify the investment.

- Cost Reduction: Calculate expected Oracle licensing savings after Snowflake migration. Moving from fixed, expensive on-premise hardware capacity to Snowflake’s elastic, pay-per-use compute model drastically alters infrastructure costs.

- Performance and Agility: Set baseline metrics for faster BI query execution, higher concurrency for self-service analytics, and shorter time-to-new-data for decision-making.

- Risk and Compliance: Ensure the target Snowflake environment provides an equivalent or superior security posture. Regulated industries must map out data governance, masking policies, and auditability requirements early.

3. Choose a Migration Approach and Phasing

Your methodology directly dictates your Oracle to Snowflake migration timeline and overall project risk. Enterprises generally choose between two paths: a pure “lift-and-shift” (moving schema and data with minimal redesign) or a modernization approach (re-modeling data, building ELT pipelines, and deprecating legacy PL/SQL-heavy logic).

To minimize business disruption, utilize phased rollouts:

- Domain-by-domain cutovers: Migrate specific business units sequentially (e.g., marketing first, then finance).

- Hybrid architecture: Implement read-only replication from Oracle while gradually repointing BI workloads to Snowflake.

- Zero or minimal downtime: Run Oracle and Snowflake in parallel. By utilizing Change Data Capture (CDC) replication, you can keep systems synchronized until you are ready for a controlled, validated cutover.

4. Accelerate Strategy with Multishoring

Defining a clear Oracle to Snowflake migration ROI requires accurate baseline data and proven cost-modeling. Multishoring facilitates this entire strategy phase. We provide structured technical assessments, detailed TCO/ROI modeling, and a standardized migration framework. By partnering with specialists early, enterprises establish a de-risked foundation before a single line of code is translated.

Our Data Consulting Services You Might Find Interesting

Design a De-risked Architecture and Migration Plan

Transitioning from strategy to execution requires a rock-solid technical blueprint. A well-documented Oracle to Snowflake migration architecture dictates the tools, security posture, and timeline needed to move workloads without disrupting business operations. The goal is to design a target state and a data movement plan with strict risk controls built in.

1. Target Architecture Patterns

Your target architecture must securely connect your existing environment to Snowflake.

- Standard landing zones: Extract data from Oracle, stage it in cloud object storage (AWS S3, Azure Blob, or Google Cloud Storage), move it to a Snowflake external stage, and finally load it into Snowflake core schemas.

- Connectivity and security: Secure the perimeter using network peering or VPNs, private endpoints, key-based authentication, and strict role-based access control (RBAC).

- Hybrid scenarios: Many enterprises adopt an Oracle to Snowflake hybrid migration architecture. In this model, Oracle continues to run OLTP workloads while Snowflake takes over as the primary analytics platform, fed by near real-time replication.

2. Choosing Data Movement Patterns and Tools

How you move your data impacts your Oracle to Snowflake migration timeline and system downtime.

- Batch vs. Continuous: Manual exports (like Oracle Data Pump) and generic ETL tools (Informatica, ODI, Talend) work for one-time historical batch loads. However, modern ELT platforms (Fivetran, Airbyte, AWS DMS, Striim) are better suited for ongoing syncs.

- CDC replication: Implementing Oracle to Snowflake real time replication with cdc (Change Data Capture) is the gold standard. By utilizing LogMiner or redo log-based capture, you can track incremental changes. This approach is the foundation for an Oracle to Snowflake zero downtime migration.

3. Object and Code Conversion Strategy

Oracle relies heavily on proprietary features and procedural logic that do not translate 1:1 to Snowflake.

- Object inventory: Systematically map out schemas, tables, views, materialized views, constraints, sequences, partitioned tables, and LOBs.

- SQL translation: Map Oracle functions to Snowflake equivalents (e.g., translating SYSDATE to CURRENT_TIMESTAMP, or NVL to IFNULL).

- Refactoring PL/SQL: Oracle packages and stored procedures must be handled carefully. You can rewrite them into Snowflake Scripting, externalize the logic into dbt, or encapsulate them in application logic. Automated code-translation accelerators (like SnowConvert or AI-assisted tools from Datafold) drastically speed up this process.

- Handling Oracle specifics: Replace Oracle materialized views with Snowflake streams and tasks (or standard views with scheduled refreshes). Shift away from manual Oracle partitioning and rely on Snowflake’s automatic micro-partitions and clustering.

4. Data Model and Governance Alignment

Do not just lift and shift your data model. Revisit dimensional models, slowly changing dimensions (SCDs), and aggregation strategies to take advantage of Snowflake’s compute elasticity. Incorporate enterprise data governance from day one – implement role hierarchies, dynamic data masking, row access policies, and clear data lineage tracking to meet compliance audits.

5. Project Planning and Risk Controls

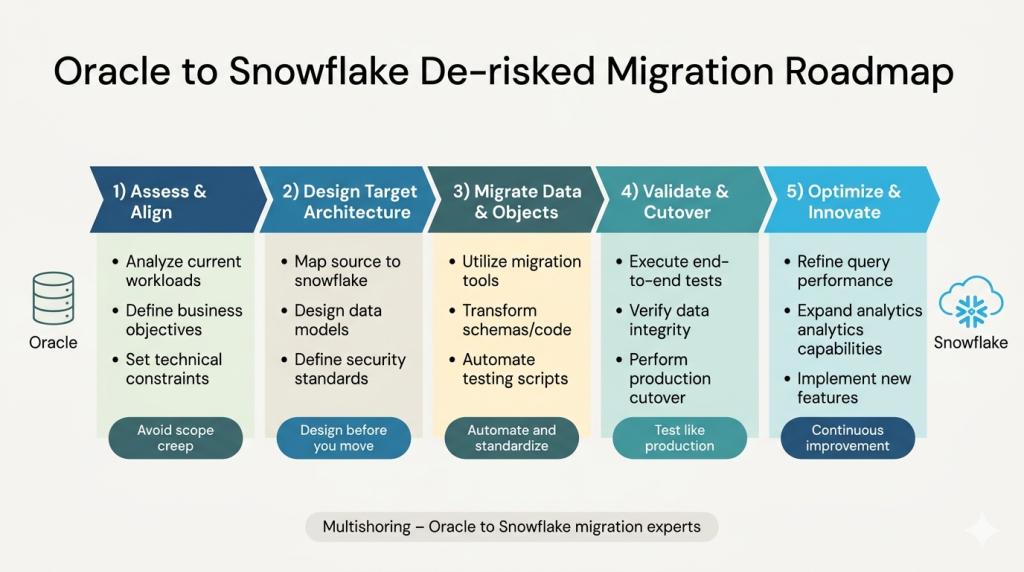

An effective Oracle to Snowflake phased migration strategy aligns with Snowflake’s own nine-phase Oracle to Snowflake migration framework: assessment, design, pilot, data migration, validation, performance optimization, BI repointing, cutover, and decommissioning.

To enforce risk control, schedule non-production dry runs, establish parallel run windows, require sign-offs per business domain, and define strict fall-back criteria. Multishoring accelerates this phase by providing standard migration checklists, runbooks, and reusable architecture patterns.

The infographic below summarizes this de-risked Oracle-to-Snowflake migration roadmap, from initial assessment to post-migration optimization.

Execute the Migration Safely – Data, Code, and Workloads

Execution is where migration theories meet operational reality. To execute an Oracle to Snowflake transition safely, you must treat testing, validation, and risk mitigation as first-class citizens. The goal is a controlled, rehearsed cutover with zero surprises.

Below is a practitioner-friendly playbook for managing data movement, refactoring code, and handling the technical nuances of the switch.

Phase 1: The Data and Schema Foundation

Before moving live data, you must establish the structural groundwork. To successfully migrate Oracle schema to Snowflake, rely on automation wherever possible, reserving manual engineering for complex edge cases.

- Extract and Convert DDL: Query Oracle data dictionary views (DBA_OBJECTS, DBA_TABLES, DBA_SOURCE) to export your definitions. Pass these through automated conversion tools to generate Snowflake-compatible DDL scripts.

- Accelerate Historical Loads: Extract historical data using Data Pump, SQL*Plus, or existing ETL tools, and land it in cloud storage. Load this data into Snowflake using the COPY INTO command. Pro tip: Provision temporary X-Large or 2X-Large Snowflake virtual warehouses to drastically accelerate these initial bulk loads, then scale them back down.

- Enable Continuous Sync: Once the historical baseline is set, configure your CDC-based replication or incremental extract jobs. This keeps Oracle and Snowflake in perfect sync during the transition window.

Phase 2: Handling Special Oracle Objects (Mapping Guide)

Oracle relies heavily on specific data types and index structures that require a different approach in a cloud-native environment. Use this mapping strategy for complex objects:

| Oracle Concept | Snowflake Execution Strategy |

|---|---|

| CLOB / BLOB Data | For Oracle CLOB BLOB migration to Snowflake, map to VARCHAR or VARIANT. Use batching strategies and compression for large tables, and pay close attention to date/time zone mapping nuances. |

| Sequences & Keys | Map directly to Snowflake sequences or IDENTITY columns. In some cases, redesigning surrogate key strategies for distributed cloud architecture yields better performance. |

| Indexes & Constraints | Snowflake does not use traditional indexes. Drop them and rely on Snowflake’s automatic micro-partitions and clustering. Enforce Primary/Foreign Key metadata for documentation and rely on your ELT pipeline for actual data integrity enforcement. |

Phase 3: Refactor Procedural Logic and Pipelines

Migrating legacy code is often the most resource-intensive step. A direct Oracle PL/SQL to Snowflake Scripting conversion is possible, but this is also the perfect time to modernize your pipelines.

Instead of replicating legacy behavior line-by-line, evaluate when to redesign logic. Many data teams choose to externalize procedural logic into dbt models or re-implement it within modern orchestration tools.

Simultaneously, turn legacy ETL into ELT. Land raw data directly into Snowflake and perform transformations using the cloud’s compute power. Replace or update legacy tools (like ODI or custom scripts) with managed pipeline solutions like Fivetran, Airbyte, Matillion, or AWS DMS, which offer built-in CDC and schema evolution.

Phase 4: The Validation Checklist

If you are wondering how to validate Oracle to Snowflake migration efforts, the answer is rigorous, automated testing. Never rely on manual spot-checks. Add these steps to your Oracle to Snowflake migration checklist:

- Automated Data Reconciliation: Run strict record counts, checksums, and column-level comparisons between Oracle and Snowflake. Utilize specialized tools (such as Datafold) for automated validation and anomaly detection.

- Query and Performance Benchmarking: Run representative analytical workloads on both platforms. Compare the results and runtimes, then tune Snowflake virtual warehouses, data clustering, and caching accordingly to ensure parity or improvement.

- UAT and Business Sign-off: Involve business users early to validate critical reports. Require formal sign-off for finance, regulatory, and executive dashboards before initiating the final cutover.

Phase 5: Cutover and Decommissioning

The final step is flipping the switch. Depending on your risk tolerance, choose between a “big bang” cutover or a phased domain-by-domain approach. Execute a final incremental sync, place the Oracle database into a read-only window, and repoint your BI tools. Communication to stakeholders during this window must be crystal clear. In the weeks following go-live, aggressively monitor load jobs, query performance, and infrastructure costs.

Do not pull the plug on Oracle immediately. Keep it in read-only mode as a fallback until you have clear evidence that Snowflake fully meets all SLAs. Once proven, safely decommission Oracle to finally unlock your licensing savings.

Multishoring actively supports this critical window by running rehearsed dry-run cutovers and providing dedicated on-call support across the entire go-live period.

Optimize Snowflake Post-Migration and Realize ROI

The work does not stop once your data successfully lands in the cloud. To fully realize your Oracle to Snowflake migration ROI, you must actively govern and tune the new environment. Without proper cost controls and resource management, cloud elasticity can quickly lead to unexpected spikes in spend.

Understanding how to optimize Snowflake after Oracle migration ensures you transition from merely operating in the cloud to actually maximizing its value. Below is a strategic matrix detailing the four key pillars of post-migration optimization.

The Post-Migration Optimization Matrix

| Optimization Pillar | Strategic Actions | Expected Business Value |

|---|---|---|

| 1. Performance & Cost Control | • Right-size virtual warehouses and pair them directly to specific workloads. • Enable auto-suspend and auto-resume. • Isolate ELT, BI, and data science workloads into dedicated warehouses. • Optimize data layout by clustering heavy tables, pruning cold data into cheaper storage layers, and leveraging result caching. • Set up usage dashboards and alerts for runaway queries. | Eliminates resource contention, ensures predictable billing, and prevents cost anomalies. |

| 2. Data Governance & Security | • Implement strict Role-Based Access Control (RBAC). • Apply row-level security and dynamic data masking policies based on data classification. • Ensure comprehensive logging and auditing to meet or exceed the compliance standards previously maintained in Oracle (especially critical for healthcare, finance, and public sector). | Guarantees enterprise-grade security and simplifies regulatory compliance audits. |

| 3. Ecosystem & BI Enablement | • Execute seamless Oracle to Snowflake BI repointing by routing tools like Tableau, Power BI, Qlik, and Oracle Analytics to Snowflake via optimized gateways and drivers. • Introduce modern analytics stack patterns to replace legacy processes. • Adopt dbt for in-database transformations and implement reverse ETL to push curated data back to operational apps. | Accelerates the timeline for Oracle to Snowflake ETL modernization and empowers business users with new self-service capabilities. |

| 4. Quantifying ROI | • Document the hard reduction in Oracle licensing, maintenance, and infrastructure costs. • Track the decrease in system outages and time saved for data engineering teams through automated maintenance. • Measure the improvement in report generation speed. | Provides executive stakeholders with clear, measurable proof of the migration’s financial and operational success. |

Communicating Success and Planning the Next Phase

Do not keep your migration wins a secret. Data leadership should actively publish internal success stories and performance metrics 3 to 12 months after the cutover. Highlighting faster query times and reduced infrastructure costs helps secure buy-in for future data initiatives.

The end of the migration is the beginning of your modern data lifecycle. Multishoring continues to add value long after the initial cutover. As a long-term Snowflake partner, we provide ongoing warehouse tuning, assist with onboarding new business domains, and help your teams build advanced analytics and machine learning use cases on top of your newly migrated platform.

Conclusion

Moving from Oracle to Snowflake is far more than a simple bulk data copy; it is a comprehensive, multi-phase transformation that elevates your architecture, governance, internal processes, and people. To truly de-risk this enterprise modernization, organizations must rely on proven strategies rather than hope. By committing to careful upfront assessments, phased rollouts, CDC-based data synchronization, rigorous automated validation, and thoroughly rehearsed cutovers, you protect critical business operations while unlocking the boundless scalability of the Data Cloud.

Navigating this complexity does not mean your internal IT and data teams have to shoulder the burden alone. Multishoring brings deep, specialized expertise in both legacy Oracle environments and modern Snowflake architectures to actively guide your journey. We accelerate your timeline by providing strategic advisory alongside hands-on engineering, utilizing our proven migration accelerators – including pre-built templates, automated conversion scripts, and validated architecture patterns – to ensure a smooth, predictable transition.

The fastest way to build your business case and define a secure path forward is to start with a clear, objective baseline.